Unveiling the AI Future: Highlights from NVIDIA's GTC 2024

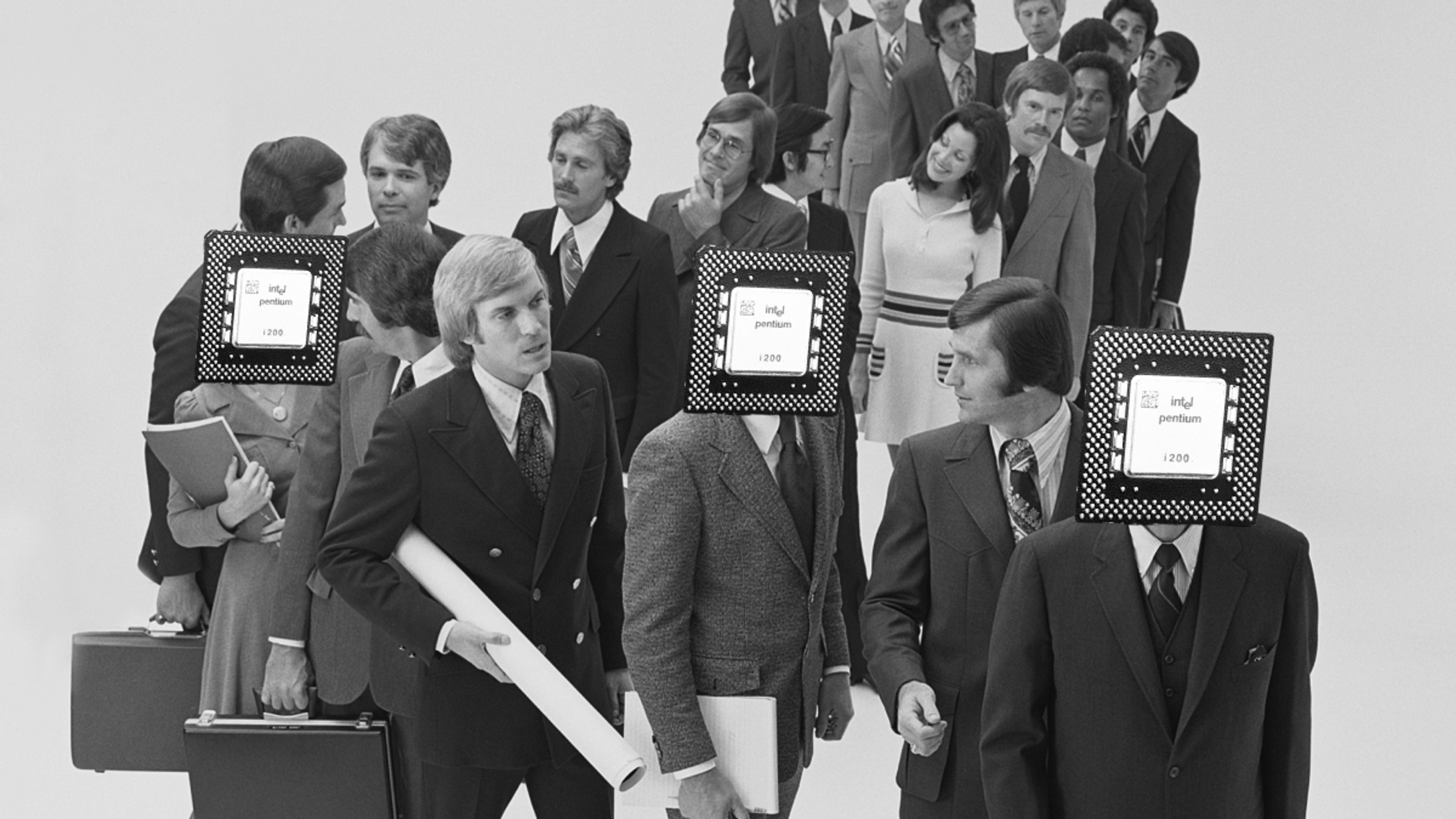

Good to be back on the floor, massive conference

After a nearly 5-year absence, NVIDIA’s GPU Technology Conference (GTC) was finally back this month in person and in full force. The difference is incredible: 5 years ago, the conference was a blend of gaming and enterprise AI themes, with many of the talks and presentations revolving around the latest graphics performance from the GeForce 20 series, how to leverage CUDA in the data center, and teasing the DGX A100. Fast forward to today, and GTC is now the premier AI and AI infrastructure developer conference in the world.

The rise of NIMs – Making AI science easier along with NeMo

NVIDIA uses GTC to showcase their vision and announce new products and technologies. This year the announcements started on the software side with NIMs (NVIDIA Inference Microservices), which are modular, containerized engines designed to take advantage of existing foundation models and make it easy to layer services over them. The launch also included specialized NIMs for specific business areas such as healthcare. When combined with the NeMo development platform, this can accelerate building AI pipelines significantly, but with a caveat: NIM integration is all built on top of CUDA libraries and is optimized for NVIDIA hardware. If you’re looking elsewhere for your GPUs, you’ll have to build your own pipeline independently. For more insights on NIM and why it changes the game, see Phil Curran’s blog.

Omniverse

NVIDIA also showed off the evolution of their Omniverse tools and APIs, allowing 3D rendering to be highly integrated with user tools to create route optimizations, visual object simulation and creation, and more. NVIDIA’s vision for this is that Omniverse will be the path to create full digital twins of environments that can then be acted upon by AI to test and simulate real-world scenarios in a digital environment. They even showcased a pre-built, high-resolution digital twin called Earth-2 that's available to help with geophysical and climate science simulations. The big announcement in this space was the availability of Omniverse as a set of cloud APIs, allowing you to choose which of the Omniverse tools you want to run without having to deploy the full enterprise set. This allows all of the capabilities to be rented out as needed without having to buy complete infrastructure such as a full OVX environment.

Speaking of OVX, this segues into the star of the show: new hardware and systems. The OVX platform has been updated to include not just L40 GPUs, but also BlueField-3 DPUs and appropriate networking to create what could be characterized as almost a “rendering SuperPOD” for digital twins. This scale-out approach is interesting because there is now a direct tie-in: a generative AI and simulation training and inference platform such as SuperPOD can take input, process it, and then hand the results to an OVX environment to render. This ties into the rise of the “AI Factory,” where NVIDIA has a vision of end-to-end data and AI token generation that become the currency of a digital infrastructure. If you have the data science tools, the pipelines to process the data, and the hardware to do it, you now have the ability to generate data outcomes as a business. More on this in a little bit…

B100 (Blackwell) chip and DGX GB200 Systems

NVIDIA also introduced the new “Blackwell” GPU, named after noted statistician and mathematician David Blackwell. (Not to be confused with the David Blackwell who works for WEKA and wishes he was getting royalties on the GPU name.) When two Blackwells and one ‘Grace’ CPU are connected together, they form the new GB200 superchip, with 384GB of HBM3e vRAM and 40 petaFLOPS of performance. NVIDIA then unveiled the new physical architecture: water-cooled trays of processors that slide into a custom rack up to 36 at a time. To CUDA, this all appears as if it’s one giant modular GPU, capable of 1.4 exaFLOPS in a single rack. This is mind-boggling performance when you consider that just 2 generations ago, the DGX A100 system with 8 GPUs onboard was capable of only 5 petaFLOPS. Combine this with the new NVLink switching and fast CX-8 800Gb/s networking, and you have an incredible beast of a system.
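As a quick sanity check on those headline numbers, the rack-level figure can be reproduced from the per-superchip figure. This is only a back-of-the-envelope sketch: it assumes the “36 at a time” refers to GB200 superchips (our reading, not a published spec), and vendor FLOPS figures for different generations may be quoted at different numeric precisions, so treat the ratio as a rough indicator rather than a like-for-like benchmark.

```python
# Headline figures quoted in the text above.
GB200_PFLOPS = 40      # petaFLOPS per GB200 superchip
UNITS_PER_RACK = 36    # assumption: "36 at a time" counts GB200 superchips
DGX_A100_PFLOPS = 5    # petaFLOPS for one 8-GPU DGX A100 system

# Aggregate rack performance, in petaFLOPS and exaFLOPS.
rack_pflops = GB200_PFLOPS * UNITS_PER_RACK
rack_exaflops = rack_pflops / 1000

# How many DGX A100 systems one rack is "worth" on paper.
ratio_vs_dgx_a100 = rack_pflops / DGX_A100_PFLOPS

print(f"Rack: {rack_pflops} petaFLOPS ({rack_exaflops:.2f} exaFLOPS)")
print(f"Ratio vs. one DGX A100: {ratio_vs_dgx_a100:.0f}x")
```

Under these assumptions the rack comes to 1,440 petaFLOPS, which lines up with the roughly 1.4 exaFLOPS quoted, and a paper ratio of a little under 300 to 1 against a single DGX A100.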

One specific comparison during the keynote was inference speed: Blackwell was shown delivering up to 30 times the inference performance of the H100 ‘Hopper’ chip. This makes for an interesting observation. A full DGX GB200 NVL72 system rack draws 120 kW of power across its 72 Blackwell GPUs, or ~1.7 kW per Blackwell. A DGX H100 system with 8 GPUs, on the other hand, draws 10.2 kW, or ~1.3 kW per Hopper. These numbers include all ancillary system power consumption (DPU, switching, cooling) in both systems, not just the GPUs themselves. Since Blackwell has more than twice as many transistors as Hopper and delivers far more performance for only a modest increase in per-GPU power, this implies that the compute is very efficient and performance dense, with significantly lower power per FLOP on Blackwell.
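The per-GPU power comparison is simple division, sketched below using only the system-level figures quoted above. The function and variable names are ours, purely for illustration.

```python
def kw_per_gpu(system_kw: float, gpu_count: int) -> float:
    """Average system power attributed to each GPU, ancillary draw included."""
    return system_kw / gpu_count


# DGX GB200 NVL72 rack: 120 kW across 72 Blackwell GPUs.
nvl72_kw = kw_per_gpu(120.0, 72)

# DGX H100 system: 10.2 kW across 8 Hopper GPUs.
dgx_h100_kw = kw_per_gpu(10.2, 8)

# Relative increase in per-GPU power from Hopper to Blackwell.
increase = nvl72_kw / dgx_h100_kw - 1

print(f"NVL72:    {nvl72_kw:.2f} kW per GPU")
print(f"DGX H100: {dgx_h100_kw:.2f} kW per GPU")
print(f"Per-GPU power increase: {increase:.0%}")
```

This works out to roughly 1.67 kW per Blackwell versus about 1.28 kW per Hopper, an increase of around 30% in per-GPU power. Set against the inference speedup claimed in the keynote, that is where the lower power per FLOP comes from.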

Sustainability becomes an issue at this point. With electricity production rising more slowly than the power being consumed, power shortages are appearing at the data center level. Utilities are struggling to provide the infrastructure or generation capacity to power the data centers that host these GPU systems. It’s reaching a point where power is the new currency of the AI economy. If these systems are pulling that much power, and also need to be cooled, then getting the most out of them means the ancillary infrastructure MUST be able to pump data into these DGX systems as efficiently as possible to keep them running at maximum utilization. Otherwise, an idle system’s ancillary components will still consume tremendous amounts of power.

WEKA - Feeding the Blackwell beast

Blackwell is an amazing feat in its own right, with one particular item standing out to us: a full DGX NVL72 system can have up to 144 CX-8 NICs running at 800Gb/sec. That’s right, a full DGX rack can have up to 14.4 or 28.8 TB/sec of throughput. (We’re not sure yet if CX-8 is 800Gb per NIC or per port as the final specs have not been released.) Performance like that needs complementary systems in place to make it run as fast and efficiently as possible.

WEKA has had several announcements leading up to and at GTC that show how we can help with performance density and ultimately advance the sustainability of AI. We started by announcing BasePOD certification on H100 back in early February, then followed it up with both SuperPOD certification and the release of the new WEKApod™ appliance at GTC itself. WEKApod is the system used for the BasePOD and SuperPOD certification. It delivers extremely high performance: over 720GB/s of throughput, 18 million IOPS, and latencies in the sub-150µs range, making it capable by NVIDIA’s standards of powering over 96 DGX H100 systems (768 GPUs) in its base configuration of 1 petabyte of storage in 8 nodes. It can also be easily expanded to scale linearly as needed to handle up to 1024 DGX H100 systems. This is critical because a base configuration WEKApod draws as little as 8 kW of power in operation, compared to other systems which could take up to 50 kW to achieve similar performance results. With power for the current and next generation of AI systems at such a premium, WEKApod stands out as being able to reduce the footprint of storage needed to ‘feed the beast’ of AI GPUs.
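For readers who want to see where the 14.4 and 28.8 TB/sec figures come from, here is a minimal sketch of the arithmetic. The `links_per_nic` parameter is our own hypothetical way of modeling the open question of whether 800Gb/sec applies per NIC or per port; it is not taken from any NVIDIA specification.

```python
def aggregate_tbytes_per_sec(nic_count: int,
                             gbits_per_link: float,
                             links_per_nic: int = 1) -> float:
    """Total rack network throughput in terabytes per second (decimal units)."""
    total_gbits = nic_count * gbits_per_link * links_per_nic
    total_gbytes = total_gbits / 8     # 8 bits per byte
    return total_gbytes / 1000         # 1000 GB per TB


# Case 1: 800 Gb/s is the total for each NIC.
per_nic = aggregate_tbytes_per_sec(144, 800, links_per_nic=1)

# Case 2 (assumption): each NIC has two ports at 800 Gb/s apiece.
per_port = aggregate_tbytes_per_sec(144, 800, links_per_nic=2)

print(f"800 Gb/s per NIC:  {per_nic:.1f} TB/s")
print(f"800 Gb/s per port: {per_port:.1f} TB/s")

# Base WEKApod sizing quoted above: 96 DGX H100 systems with 8 GPUs each.
gpus_fed = 96 * 8
print(f"GPUs in 96 DGX H100 systems: {gpus_fed}")
```

The same conversion is a handy check whenever networking (bits) and storage (bytes) figures sit side by side, since the factor of eight between them is easy to lose track of.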

Wrapping it All Up

Taking all of the amazing GTC news into consideration, here are the big takeaways:

NVIDIA with NIM and NeMo has now created a full vertical business model for AI: optimized models pre-packaged for use, data science tools and frameworks that are pre-packaged for use, and the latest and fastest hardware for it all to run on. The challenge is that you can only run those optimized tools and models on NVIDIA hardware as they are all CUDA dependent, so there's a bit of lock-in. If you want to take them elsewhere, you’ll need to do extensive additional work.

NVIDIA DGX Cloud and Omniverse Cloud combined now give you the ability to rent infrastructure, with all the tool optimizations included, directly from NVIDIA. This provides a rapid gateway to start small and then expand as needed into either other cloud or GPUaaS providers, or back into on-prem hardware as it becomes available.

Power is the new currency of AI. The realities of power consumption and the lack of available power to bring into data centers mean there will be entire data centers that are unable to host this next wave of expansion for AI systems. The future trend will be that only the centers that can get enough power will host the next generation of GPUs. Expect only full-scale data centers at the scale of Equinix, Amazon, Google, Azure, and some of the GPUaaS providers to be able to deploy the latest DGX GB200 NVL72 systems, resulting in very coarse, at-scale distribution of the latest hardware to these operators only. It will be challenging to get 120 kW per-rack power density and a water cooling plant put into even mid-size data centers.

WEKA leads the way in performance density and sustainability. Now that we are certified for SuperPOD and BasePOD deployments to make integration even easier for our customers, WEKA’s new performance-efficient WEKApod can help optimize data delivery to these new GPUs. The result is a reduced power consumption profile, and faster time-to-results for the largest GPU farms in the world.

That’s our wrap-up on GTC. And even though David isn’t getting royalties off of the Blackwell name, spare a moment and take a look at the work he’s done building cool container integrations with WEKA, and maybe buy him a drink as a consolation if you meet him. He’ll appreciate it. :-)
