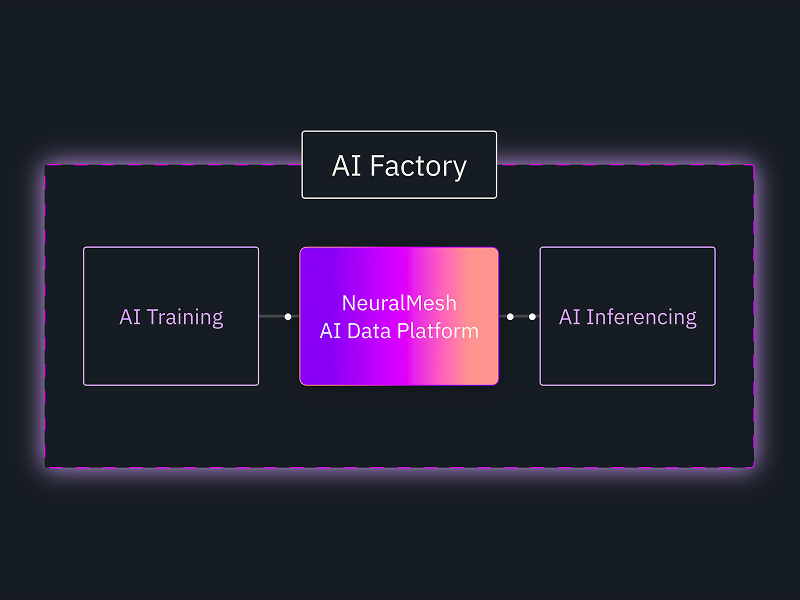

AI Factories Accelerated

The easiest way to deploy an AI factory at scale.

Faster Time-to-Outcomes

Unlock enterprise data for your AI Factory without the integration work that slows teams down, so they can focus on delivering results.

Turnkey Deployment

Deploy a fully validated AI data platform — without the heavy lifting of assembling and testing one from scratch.

Deploy Data Anywhere

Operate consistently across on-premises, cloud, and hybrid environments so data and deployments scale without compromise.

What Our Partners Are Saying

“The deployment of agentic AI in production demands a new focus on managing the continuous, coherent flow of data and inference context. By leveraging the NVIDIA AI Data Platform, solutions like WEKA’s NeuralMesh AIDP deliver the persistent context tier necessary for stable and high-scale agentic inference.”

“The real challenge in AI is no longer training models. It is running them reliably in production, at scale, with predictable performance and cost. That’s where most AI initiatives stall. The NeuralMesh AI Data Platform integrates with our AI Acceleration Cloud, Neysa Velocis, to solve that problem directly. It gives teams a way to run AI workloads as dependable systems, without carrying the operational burden of stitching together complex infrastructure.”

“Getting AI to production requires more than technology; it requires consistency and control. By using the NeuralMesh AI Data Platform with Red Hat AI Enterprise, based on Red Hat OpenShift, organizations can run data-intensive AI pipelines across on-premises and cloud environments at the scale that enterprise production demands, without sacrificing governance or security.”

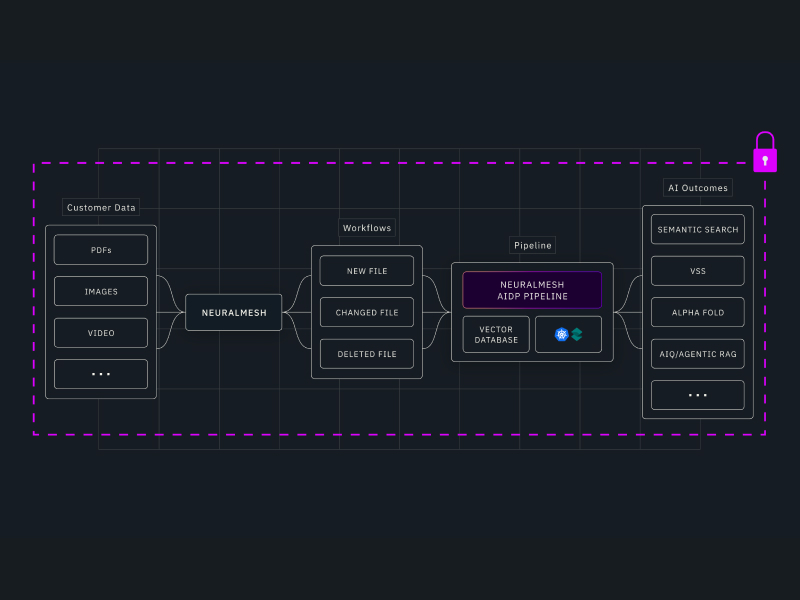

Demo: Turn Raw Data into Pure Intelligence

See how enterprise data becomes AI-ready in seconds with WEKA NeuralMesh AIDP. This demo shows secure ingestion, automatic vectorization, and real-time querying that turns raw data into actionable insights.

Dive Deeper

Common Questions, Straight Answers

NeuralMesh by WEKA is high-performance, software-defined storage built for the demands of AI factories. NeuralMesh AIDP goes further — it deeply integrates NeuralMesh with the NVIDIA AI Factory stack to deliver a complete AI data platform with automated data pipelines, unified data management, and built-in access controls. The result is a production-ready solution that gives enterprises the performance of NeuralMesh and the depth of NVIDIA integration, without the complexity of building and validating it themselves.

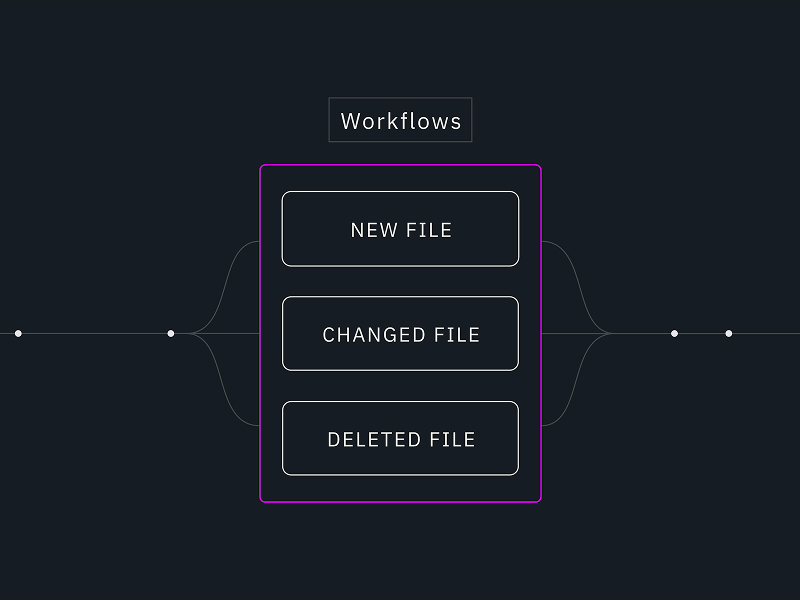

Most enterprises have data, but getting that data into a form that AI can actually use — and keeping it current as data changes — requires significant engineering effort. NeuralMesh AIDP solves that by managing the entire data pipeline automatically, from ingestion to query, so AI agents and models always have access to accurate, up-to-date information without manual intervention.

NeuralMesh AIDP is built for enterprises deploying AI Factories at scale — particularly teams that need to move from AI pilot to production quickly, without building and validating a custom data infrastructure from scratch. If your organization is running or planning NVIDIA-based AI infrastructure and needs a data platform that’s production-ready on day one, NeuralMesh AIDP is designed for you.

NVIDIA’s AI Factory blueprint defines the architecture. NeuralMesh AIDP delivers it, with deep integration into the NVIDIA stack that goes beyond storage. Automated embedding pipelines keep your data AI-ready as it changes. A unified data environment eliminates silos across training, retrieval, and inference. Day 2 operations, the ongoing management work that follows initial deployment, are handled automatically. And access controls are enforced at query time, so you can expand AI access without compromising security. The result is an AI data platform built to perform in production, not just in pilots.

NeuralMesh AIDP is built to close the gap between AI pilot and AI production. In practice, that means faster time to working AI applications, better GPU utilization because data is always available when compute needs it, and the confidence to scale AI workloads across your organization without rebuilding infrastructure every time. For teams that have struggled to move AI initiatives beyond the proof-of-concept stage, NeuralMesh AIDP is designed to remove the data infrastructure barriers that usually stall that progress.

NeuralMesh AIDP is delivered as a pre-integrated platform — validated hardware and software components that are ready to deploy together. This means your team skips the months of assembling, testing, and operationalizing an AI data platform from scratch and gets straight to running AI workloads. The heavy lifting is done before it arrives.

NeuralMesh AIDP runs consistently on-premises, in the cloud, or in hybrid environments. Data stays where it needs to be, and deployments scale as your AI Factory grows — without architectural trade-offs or starting over every time your infrastructure evolves.

Every query is authenticated and executed according to data permissions and admin-defined policies, so users only access what they’re authorized to see. This means enterprises can expand AI access broadly across teams and workloads without creating governance exposure or compromising security.

Applications connect to NeuralMesh AIDP through a standard UI and API to run semantic queries on your data. NeuralMesh AIDP handles the data layer — keeping embeddings current, managing data operations, and enforcing access controls — while your inference layer focuses on what it does best: orchestration, prompts, and responses. The two layers stay cleanly separated so neither gets in the way of the other.

Scale AI Innovation Faster With NeuralMesh

Get an inside look at the NeuralMesh ecosystem and learn how leading AI teams are eliminating latency, maximizing GPU utilization, and cutting infrastructure costs.