HPC Applications & Real World Examples

We explain what HPC is and what applications are well suited to take advantage of HPC processing power.

What are HPC Applications? HPC Applications are specifically designed to take advantage of high-performance computing systems’ ability to process massive amounts of data and perform complex calculations at high speeds. They include use cases such as:

- Analytics for financial services

- Manufacturing

- Scientific visualization and simulation

- Genomic sequencing and medical research

- Oil & gas

What Is High-Performance Computing (HPC)?

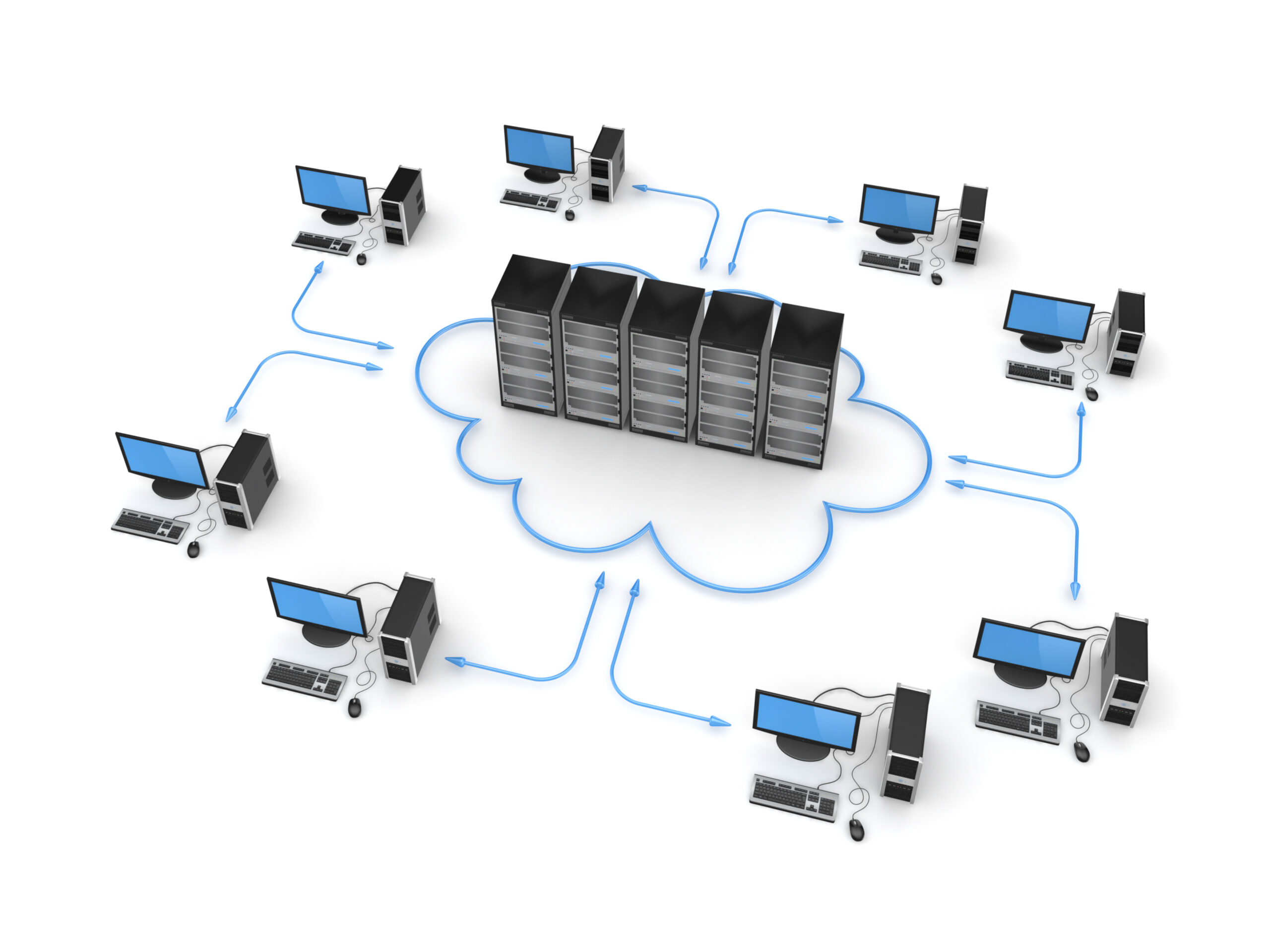

High-performance computing, or HPC, has been transformed by cloud technology. The term now covers the evolution of “super” computing beyond mainframe and even local cluster computing paradigms toward clusters of hybrid or multi-cloud systems that can handle massively parallel workloads.

These clusters serve as the foundation of high-performance computing. HPC is the combination of accessible data storage, parallel processing clusters using specialty hardware configurations, and network infrastructure to support high-demand workloads.

The common components of an HPC infrastructure include:

- Computational Hardware: HPC environments typically include specialized hardware tailored for parallel computing processes and massive data throughput. These components usually include Graphics Processing Units (GPUs), Field-Programmable Gate Arrays (FPGAs), and superfast data storage (such as drives that use Non-Volatile Memory Express, or NVMe).

- High-Availability Storage: A critical component of HPC is available data, and high-availability (HA) storage provides big data access to computational systems quickly and reliably.

- High-Capacity Networking: Moving data between cloud clusters or platforms requires a networking infrastructure–typically fiber optic network hardware supporting several Gbps of throughput.

This combination of technologies has led to the launch of applications that were unachievable even 20-30 years ago.

Use Cases for HPC Applications

With new technology come new opportunities, and HPC is no exception. Big tech companies have quickly jumped on the possibilities of high-performance cloud systems. As a result, even relatively small businesses and end-users can leverage some of the impressive advances in AI, analytics, and application development.

However, HPC shines brightest when it serves large research and development enterprises across several key industries. High-performance computing is changing the way that many companies do business, and it is revolutionizing how scientists and engineers do research and development.

Some key areas where HPC has an impact include:

Machine Learning and AI

Machine learning and AI are industries in themselves, but they are also technologies that touch on almost every other industry on this list.

Machine learning has been a goal of computer scientists almost since the birth of the computer. Still, hardware and software constraints long restricted what was possible with AI. HPC has changed this, and now many cloud platforms include AI components.

How has HPC fueled growth in AI?

- Big Data Training Sets: One of the limitations of classic machine learning was the limited data to use for training. However, with the advent of cloud architecture and big data, these algorithms have terabytes of information that engineers can use to teach strategic decision-making.

- Parallel Processing: AI processing is massively parallel by design, in that ML algorithms constantly work through large quantities of data using a stable and repeating set of computations. Hardware-accelerated GPUs have made parallel processing a reality, powering cloud platforms that can push large amounts of data through learning algorithms.

- Distributed Applications: Delivery of always-on HPC applications has made AI a reality. Now, even consumer and business users can plug into AI through purpose-built analytics apps served over the web.
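The parallel-processing point above can be sketched in a few lines of Python. This is only an illustrative toy, not how any particular HPC platform or ML framework works: the model (`y = w * x`), the helper names (`shard_gradient`, `split`, `data_parallel_gradient`, `train`), and the use of a thread pool in place of GPUs or cluster nodes are all assumptions made for the example. It shows the same gradient computation being applied to separate shards of data at once, with the partial results combined afterward.

```python
from concurrent.futures import ThreadPoolExecutor


def shard_gradient(shard, w):
    """Sum of squared-error gradients for the toy model y = w * x over one shard."""
    return sum(2 * (w * x - y) * x for x, y in shard)


def split(data, n_shards):
    """Split data into at most n_shards contiguous pieces of similar size."""
    size = -(-len(data) // n_shards)  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]


def data_parallel_gradient(data, w, n_workers=4):
    """Run the same computation on every shard, then combine the partial sums."""
    shards = split(data, n_workers)
    # A thread pool stands in for GPUs or cluster nodes in this sketch.
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(lambda s: shard_gradient(s, w), shards))
    return sum(partials) / len(data)


def train(data, steps=200, lr=0.01):
    """Plain gradient descent that uses the sharded gradient at every step."""
    w = 0.0
    for _ in range(steps):
        w -= lr * data_parallel_gradient(data, w)
    return w


# Toy training set generated from y = 3x (hypothetical data for illustration).
data = [(x, 3.0 * x) for x in range(1, 9)]
w = train(data)
print(round(w, 3))
```

Because each shard is processed independently, adding more workers (or, on real HPC hardware, more GPU cores or nodes) lets the same repeated computation cover more data per step; only the final combination requires communication between workers.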

Financial Analytics

Let’s table discussions about online banking, chatbots, and other consumer products. When it comes to major financial firms (and even mid-size investment operations), HPC is changing the game. High-performance cloud computing fuels predictive models that inform decision-making around risk mitigation, investment, and real-time analytics.

What does HPC mean for the finance industry?

- Responsiveness: Companies can respond more quickly and accurately to changes in markets and finances.

- Resilience: HPC makes it practical to create large system snapshots, plan redundancies, and maintain rapid recovery plans.

- Intelligence: The analytics that HPC can provide for decision-makers in a financial firm far exceed the capabilities of humans to recognize patterns and trends. Accordingly, these insights can help drive profitability and stability for almost any company.

Manufacturing

Companies deeply invested in their manufacturing operations, including supply chain logistics and process optimization, are already diving into cloud analytics and HPC to streamline operations, reduce waste, and mobilize predictive maintenance to avoid downtime.

What does HPC mean for the manufacturing industry?

- Optimization: Manufacturing processes are complex, whether they involve a production line, a massive machine, the transport of materials, or a lengthy supply chain with thousands of stakeholders. HPC-driven AI can help engineers and operators optimize any or all of these processes. That optimization includes minimizing waste, streamlining operations, and reducing costs down to the penny.

- Predictive Maintenance: Such complex systems also require maintenance, which can mean downtime and lost money. Digital twin simulations and edge computing can help engineers prevent downtime by predicting failures or keeping regular, sensible maintenance schedules for individual parts and processes.

Genomic Sequencing

Genomic sequencing is one of the most complex and resource-intensive problems in computing. Figuring out DNA sequences in organisms is already difficult enough, but the goals of decoding these sequences, eliminating genetic diseases, and developing vaccines are even more so.

Genomic sequencers are as resource-hungry as machine learning or analytic cloud platforms, if not more so. They require access to massive amounts of data, pushed through parallel processing platforms, to derive any meaningful information. Furthermore, these platforms will almost inevitably feed into HPC applications that researchers can use to make sense of these results in areas like the life sciences and pharmaceuticals.

What does HPC mean for genomic sequencing?

- Processing Acceleration: The sheer amount of data involved in even the simplest genome can lead to terabytes of information that must be processed–a daunting task best served through an HPC platform’s accelerated, parallel processing.

- Budgets: The sad fact is that even the most worthwhile research project has to navigate a budget, and that’s just as true for genomic sequencing. Properly configured and supported with strategic hybrid cloud or multi-cloud environments, large HPC systems can help cut costs on long-term processing and storage services.

Oil and Gas HPC Applications

Oil and gas is a massive, multi-billion dollar industry that spans gas stations, oil wells, deep-water platforms, and thousands of miles of pipelines and storage facilities. Needless to say, it benefits major oil companies to optimize logistics and operations anywhere they can.

HPC has changed how these companies, and the industry as a whole, approach their supply chain. They are using HPC-driven AI and analytics to cut down on waste, optimize designs for pipelines and trucking logistics, and minimize environmental impact.

What does HPC mean for the oil and gas industry?

- Cost Cutting: Every penny saved adds up, and over a global operation, this leads to millions of dollars in retained revenue. Those savings come from specific areas: transportation, retained materials, and hours otherwise lost to repairs or errors.

- Environmental Considerations: Oil and gas operations have a significant footprint, but many are looking for ways to cut that impact through optimized truck routing, migration to energy-efficient machinery, and better-managed oil and gas pipelines.

- Research and Development: New extraction techniques like fracking and deep-sea exploration are leading the drive to deep stores of oil and gas. However, these approaches are exploratory, which means that the industry’s engineers, scientists, and researchers need exceptional tools to drive their efforts.

Trust WEKA as the Foundation of Your HPC

High-performance computing infrastructures aren’t just collections of hardware and networks. Effective HPC systems need a robust framework to orchestrate applications and data, manage security and errors, and streamline interactions so that users never have to know what lies underneath their tasks.

With WEKA, you can use the following features to power a robust HPC strategy:

- Streamlined and fast cloud file systems to combine multiple sources into a single high-performance computing system

- Industry-best GPUDirect performance (113 Gbps for a single DGX-2 and 162 Gbps for a single DGX A100)

- In-flight and at-rest encryption for governance, risk, and compliance requirements

- Agile access and management for edge, core, and cloud development

- Scalability up to exabytes of storage across billions of files

To learn more about WEKA high-performance cloud, contact our specialists today.