Articles

Built With AI Clouds: Multitenancy That Scales and Economics That Finally Work

How AI’s Memory Wall Is Reshaping Infrastructure Strategy Beyond GPUs

Inference Has a Memory Problem. What Comes Next?

Your Kubernetes Workloads Aren’t CPU Bound — They’re Waiting on Storage

AI Workflows in Financial Services: How Storage Makes or Breaks Your Models

Why the Infrastructure That Built Your AI Won’t Run It

What AI Infrastructure Actually Costs (And Why Most Teams Get It Wrong)

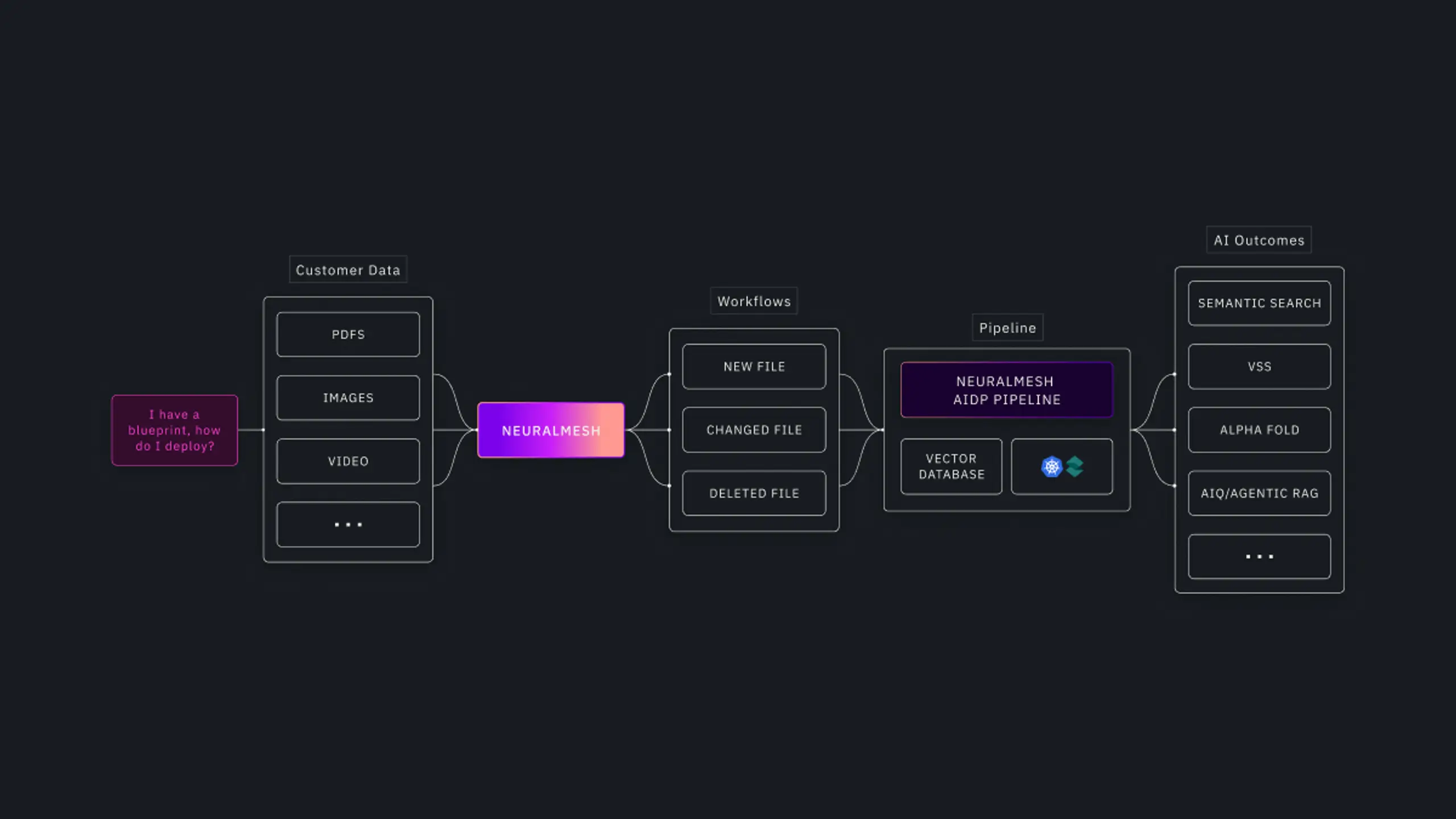

NeuralMesh AI Data Platform: Built to Operationalize AI Factories at Enterprise Scale

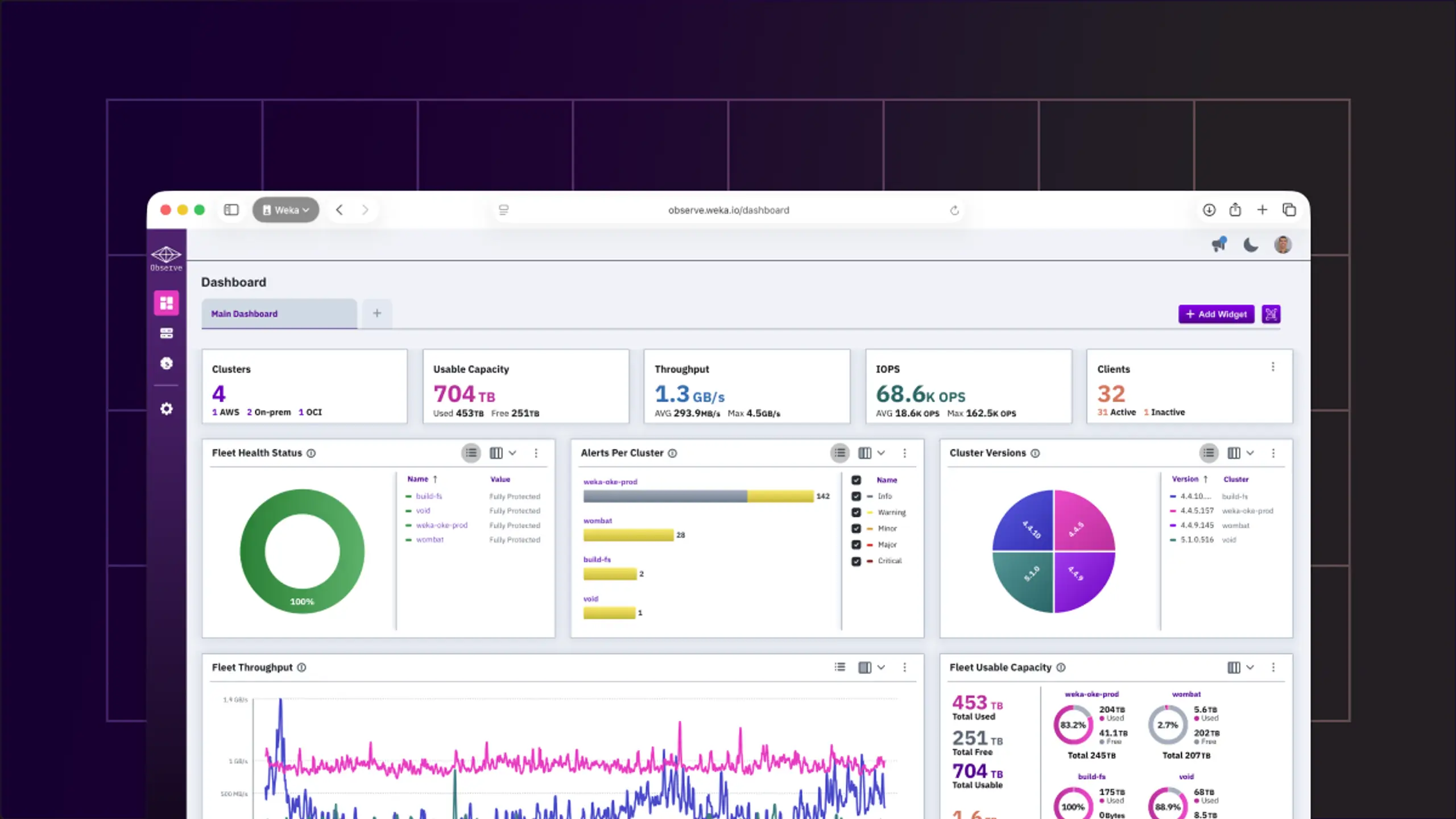

NeuralMesh Observe: Visibility and Control for Your WEKA Environment

Demystifying the BlueField-4 & Inference Context Memory Storage Announcement

Fixing the Unit Economics of AI Is Really a Memory Problem

Scale Production AI Faster with NeuralMesh

Your models aren’t slow. Your data is. Fix AI bottlenecks with high-throughput infrastructure.