NeuralMesh AI Data Platform: Built to Operationalize AI Factories at Enterprise Scale

WEKA has been building with NVIDIA infrastructure for nearly a decade. NeuralMesh has validated its software-defined architecture across the NVIDIA stack so enterprises can operationalize data movement and performance at scale, not just assemble components. Today, that partnership takes its next step with the announcement of NeuralMesh AIDP, purpose-built to help enterprises operationalize NVIDIA AI Factories in production.

Standing up an AI Factory is one thing. Operationalizing it is another. As enterprises move from blueprint to production, the data infrastructure underneath the compute layer becomes the critical variable. That’s the challenge WEKA’s NeuralMesh AI Data Platform (AIDP) was built to solve.

AI Factories Demand More From Data Infrastructure

An AI Factory isn’t a one-time deployment. It’s a continuously operating loop: data ingestion, transformation, retrieval, and inference running in parallel, at scale, around the clock. As data changes, models evolve, and demand grows, the system has to keep up without missing a beat.

Traditional data infrastructure wasn’t designed for this. It was built for home directories, backup, replication, and compliance. Many enterprises have tried to bridge the gap by assembling their own stacks—a vector database here, an ETL job there, a serving framework bolted on top. These stitched-together approaches might survive a demo. They struggle in production.

The core challenges enterprises hit when operationalizing an AI Factory come down to a few fundamental factors:

- Data changes constantly. Enterprise data lands, changes, and gets deleted nonstop, and the system has to detect those changes and ingest them incrementally so information stays fresh instead of going stale.

- Data must become AI-ready, reliably. Parsing, chunking, enrichment, embeddings, metadata, and lineage have to be consistent; when this layer drifts, retrieval becomes brittle and teams end up reprocessing constantly.

- Retrieval has to stay current. Vectors and indexes drift as sources change. Without continuous updates, you either serve stale context or rebuild so often that operational stability suffers.

- Production inference depends on current context. Under multi-turn, concurrent load, stale context and drifting indexes force recompute, increase latency, and raise cost per token. Keeping enterprise data, embeddings, and retrieval current is what allows inference systems to stay responsive and efficient in production.

Storage Is Now a First-Class AI System, Powered by NeuralMesh and NVIDIA

WEKA has been building with NVIDIA AI infrastructure through the evolution of the AI Factory.

Today, storage is a first-class AI system within the AI Factory. Built on the NVIDIA AI Data Platform reference design, NeuralMesh AIDP delivers a validated, referenceable, and deployable blueprint optimized for the enterprise. Aligned with NVIDIA reference architectures, engineered for AI performance demands, and purpose-built for AI Factory workloads, NeuralMesh now integrates more deeply than ever with the NVIDIA stack.

With NeuralMesh AIDP, WEKA delivers a software-defined data platform—standardizing high-performance AI data services, accelerating AI outcomes, and enabling a practical path to shared inference context.

Announcing NeuralMesh AIDP

An AI Factory needs more than fast compute. It needs a data platform layer that operationalizes how private enterprise data becomes usable AI context. NeuralMesh AIDP is designed to be a deployable, appliance-style system that turns NVIDIA blueprint intent into repeatable production workflows without forcing enterprises to stitch together a bespoke platform from dozens of moving parts.

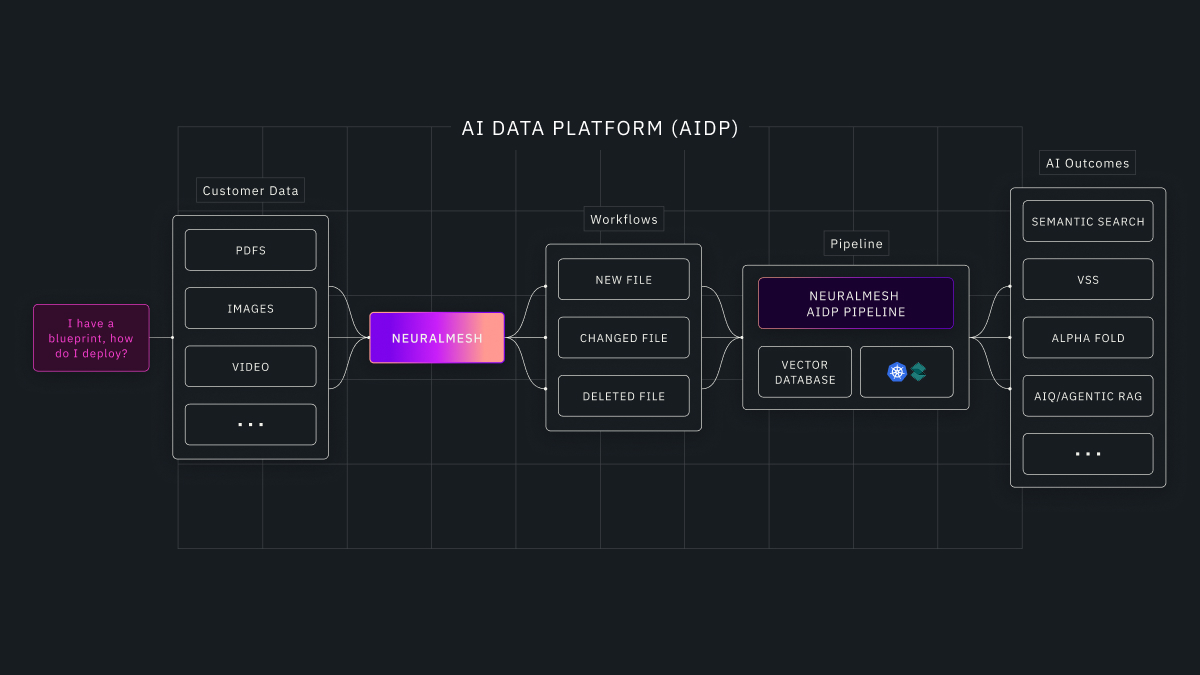

At the workflow level, NeuralMesh AIDP is built around a simple operational loop: as enterprise data lands, changes, or is deleted, the platform keeps AI-ready representations and retrieval continuously current.

- Ingestion and change detection across enterprise sources as data is created, updated, or deleted

- AI-ready transformation through parsing, chunking, enrichment, embeddings, metadata, and lineage

- Vectorization and retrieval freshness through an integrated vector database layer that keeps indexes continuously current

- Policy-aware data readiness so security, permissions, and governance remain enforced through metadata and control frameworks

- Always-current retrieval context so semantic search and RAG systems stay aligned to source-of-truth enterprise data over time
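To make the loop above concrete, here is a minimal, self-contained sketch of incremental change detection feeding a vector index. It is not NeuralMesh AIDP code or an AIDP API; every name in it (`IncrementalIndex`, `sync`, `chunk`, `embed`) is hypothetical. It assumes each sync pass receives a full snapshot of the sources as a path-to-text mapping, detects creates, updates, and deletes by comparing SHA-256 content hashes, re-chunks and re-embeds only documents whose hash changed, and removes vectors for deleted sources. The `embed` function is a toy hashed bag-of-words stand-in for a real embedding model.

```python
import hashlib
import math
from dataclasses import dataclass, field


def content_hash(text: str) -> str:
    """Fingerprint a document so unchanged content can be skipped."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def chunk(text: str, size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap slightly."""
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


def embed(text: str, dim: int = 64) -> list[float]:
    """Toy stand-in for an embedding model: L2-normalized hashed bag of words."""
    vec = [0.0] * dim
    for token in text.lower().split():
        bucket = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


@dataclass
class IncrementalIndex:
    """Hypothetical index kept in sync with a changing document set."""
    doc_hashes: dict[str, str] = field(default_factory=dict)
    # chunk id -> (vector, lineage metadata linking the chunk to its source)
    vectors: dict[str, tuple[list[float], dict]] = field(default_factory=dict)

    def _drop_source(self, path: str) -> None:
        stale = [cid for cid, (_, meta) in self.vectors.items() if meta["source"] == path]
        for cid in stale:
            del self.vectors[cid]

    def sync(self, snapshot: dict[str, str]) -> dict[str, list[str]]:
        """Apply creates, updates, and deletes from one snapshot of the sources."""
        changes = {"added": [], "updated": [], "deleted": [], "unchanged": []}
        # Deletes: sources indexed earlier that are missing from this snapshot.
        for path in list(self.doc_hashes):
            if path not in snapshot:
                self._drop_source(path)
                del self.doc_hashes[path]
                changes["deleted"].append(path)
        # Creates and updates: re-chunk and re-embed only when the hash changed.
        for path, text in snapshot.items():
            new_hash = content_hash(text)
            old_hash = self.doc_hashes.get(path)
            if old_hash == new_hash:
                changes["unchanged"].append(path)
                continue
            if old_hash is not None:
                self._drop_source(path)  # remove outdated chunks before re-indexing
            for i, piece in enumerate(chunk(text)):
                meta = {"source": path, "doc_hash": new_hash, "chunk": i}
                self.vectors[f"{path}#{i}"] = (embed(piece), meta)
            self.doc_hashes[path] = new_hash
            changes["added" if old_hash is None else "updated"].append(path)
        return changes

    def search(self, query: str, k: int = 3) -> list[str]:
        """Return ids of the k chunks with the highest cosine similarity."""
        q = embed(query)
        scored = sorted(
            ((sum(a * b for a, b in zip(q, vec)), cid) for cid, (vec, _) in self.vectors.items()),
            reverse=True,
        )
        return [cid for _, cid in scored[:k]]
```

Calling `sync` again after editing one file re-embeds only that file and reports the others as unchanged, while a removed file's vectors disappear so retrieval cannot return deleted content. This avoids both failure modes described earlier: stale context and full rebuilds. A production pipeline would more likely react to change events than diff full snapshots, and would use a real embedding model and vector database.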

Sensitive data often stays on-premises while compute footprints expand across private and public cloud environments. NeuralMesh AIDP provides a consistent operating model across these footprints, so teams can place AI workloads where they make the most sense without rewriting pipelines every time the environment changes.

NeuralMesh AIDP: Best-in-Class Partnerships

NeuralMesh AIDP is built with a best-in-class partner ecosystem so customers can standardize on familiar tools, preserve existing investments, and adopt the stack that best fits their environment.

Deployment and day-two operations are standardized through:

- Kubernetes orchestration via Spectro Cloud

- An integrated open-source vector database layer to avoid locking customers into a closed vector dependency

- A roadmap to extend enterprise integration with Red Hat OpenShift for consistent lifecycle management and governance across on-prem and cloud

Most importantly, NeuralMesh AIDP is delivered as a system, not a kit—validated hardware plus an integrated software stack designed to be deployed and operated like a platform.

This system includes:

- Hardware node class aligned to NVIDIA RTX PRO™ 6000 Blackwell Server Edition GPUs and NVIDIA RTX PRO™ 4500 Blackwell Server Edition GPUs

- Validated configurations through Supermicro (with more to follow)

- NeuralMesh client for a unified namespace and shared data foundation

- AIDP extensions aligned with NVIDIA’s software ecosystem (NVIDIA AI Enterprise components where applicable)

- Platform services for end-to-end ingestion, embedding, retrieval, orchestration, and day-two operations

Turning Systems into Enterprise-Ready AI Factories

If you’re building an AI Factory, the takeaway is simple: NVIDIA defines the best-in-class platform and design, and NeuralMesh AIDP helps you deploy and operationalize it. From always-current AI data pipelines, to shared inference context with CMX, to high-performance storage that keeps training and KV cache performance predictable at scale, AI Factories require modern data infrastructure like NeuralMesh AIDP.

Visit our partner page to see how WEKA and NVIDIA are building AI Factories with validated architectures, certified solutions, and the NeuralMesh ecosystem.

WEKA is here to help as you begin to design and build your AI Factory.

- Double-click into our AI Factory solution page to learn more about WEKA’s vision and the outcomes you can drive.

- Discover how you can get started with the NeuralMesh AIDP here and learn more about our NVIDIA partnership and certified solutions.

Or check out “The AI Factory Blueprint: Designing for Scalable, Efficient Inference,” a VentureBeat-hosted webinar featuring WEKA and NVIDIA.