Weka AI and NVIDIA accelerate AI data pipelines

In this blog post, titled “Together, Weka AI and NVIDIA Accelerate and Scale Edge-to-Core-to-Cloud Data Pipelines”, Shailesh Manjrekar, Head of AI and Strategic Alliances at WekaIO, explains how Weka AI and NVIDIA work together to help organizations build and use edge-to-core-to-cloud data pipelines.

Driven by GPU-accelerated computing, faster 5G connectivity, and the spread of IoT, edge AI is predicted to become larger than the cloud. Amid this explosion of edge applications, enterprises and IT leaders are struggling to derive actionable intelligence from the resulting flood of data, and to operationalize and govern this sensitive information. The potential of edge AI is vast. According to a report by Tractica, AI edge device shipments are expected to grow from 161.4 million units in 2018 to 2.6 billion units by 2025.

There is tremendous innovation happening with edge AI:

- Low-power AI devices and new approaches, like analog processing for edge AI inference and neuromorphic chips

- New AI frameworks, such as TensorFlow Lite for TinyML

- Telcos launching 5G networks

- Edge data centers built closer to the edge endpoints

- Edge AI compilers and optimizers, such as NVIDIA TensorRT and Amazon SageMaker Neo

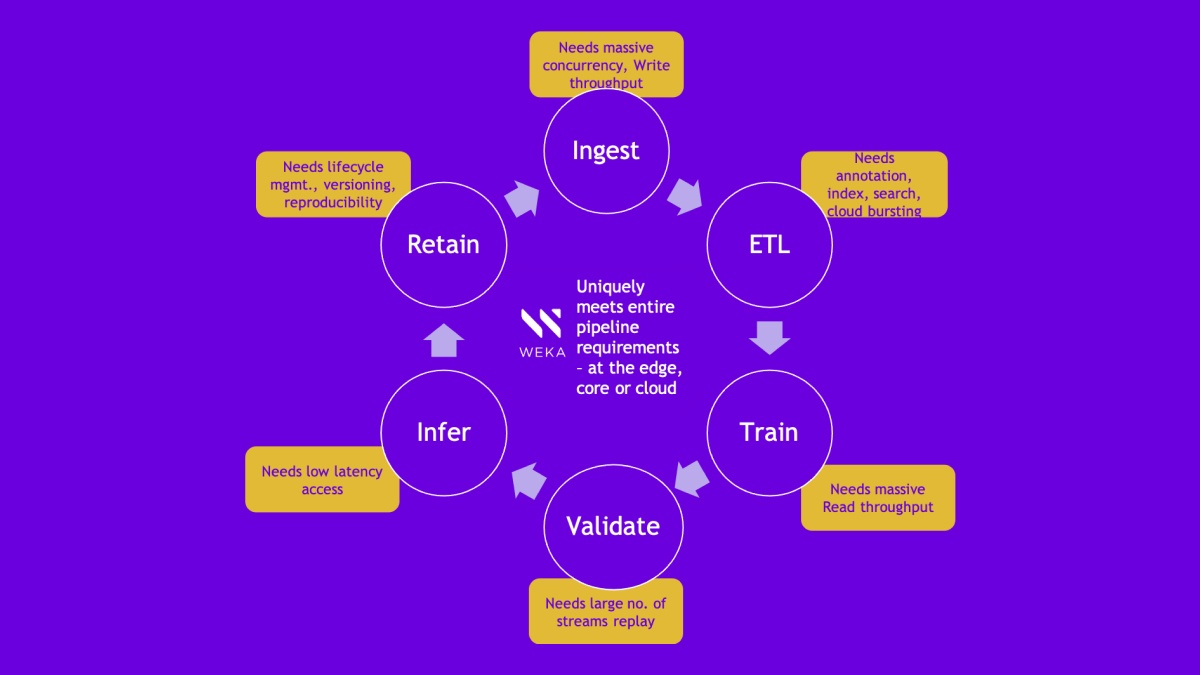

Edge-to-core-to-cloud data pipelines

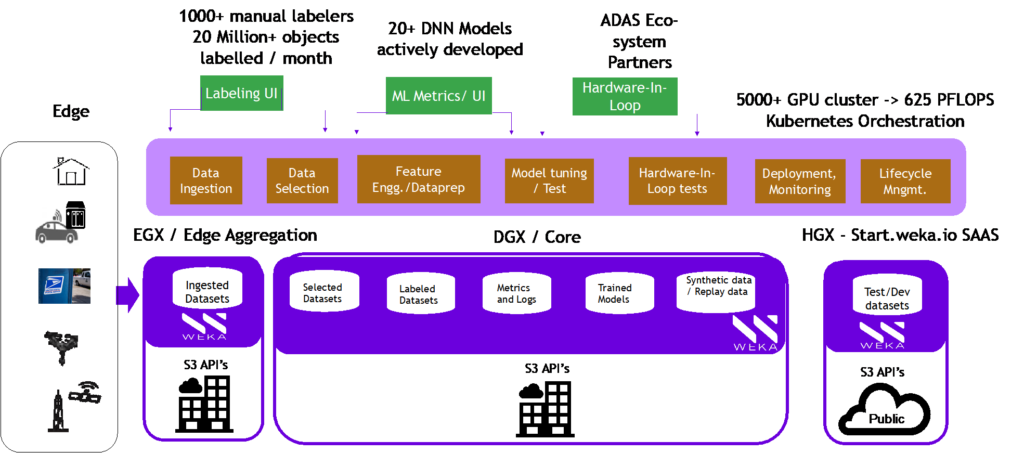

Edge endpoints include autonomous vehicles, drones, point-of-sale devices, IP cameras, and precision medical devices, all connected to edge servers. Edge servers are increasingly found in parking lots, post offices, radio access networks, retail stores, and edge data centers. An edge server aggregates real-time data, correlates it across the various edge endpoints, runs inference, and recommends an action, all with the lowest possible latency and the highest possible accuracy. Inference depends on trained models, and training is too compute-intensive to perform locally at the edge. Edge server infrastructure therefore increasingly needs to be part of an edge-to-core-to-cloud data pipeline, in which models are trained at the core or in the cloud and then deployed to the edge.

With the launch of Weka AI, a transformative solution framework, WekaIO is working closely with technology partners such as NVIDIA to implement streamlined edge-to-core-to-cloud pipelines. Whether a customer is running inference on an edge server with the new NVIDIA EGX A100, training at the core with NVIDIA DGX-1 or DGX-2, or running in the cloud on instances with NVIDIA V100 or T4 GPUs, Weka AI provides high performance, operational agility, end-to-end data security, and governance.

WekaIO is also collaborating with NVIDIA on its SDKs, including NVIDIA Clara for healthcare and precision medicine, NVIDIA Aerial for telcos, NVIDIA Jarvis for conversational AI, NVIDIA Isaac for robotics, and NVIDIA Metropolis for smart cities, retail, transportation, and more. The new NVIDIA EGX A100 converged accelerator family for edge servers, which features dual network ports for secure 100G Ethernet or InfiniBand connectivity, opens even more opportunities for collaboration to securely collect, manage, and process data at the edge.

Stay tuned for more innovation

Weka AI is bringing together a best-of-breed ecosystem to innovate in edge-to-core-to-cloud pipelines. Watch this space for news of WekaIO’s collaboration with our technology partner NVIDIA as we launch solutions designed to meet the challenges of edge AI.

Together, Weka AI and NVIDIA accelerate and scale edge-to-core-to-cloud data pipelines!

Go to Weka AI and the NVIDIA EGX Edge Computing Platform pages for more information.
